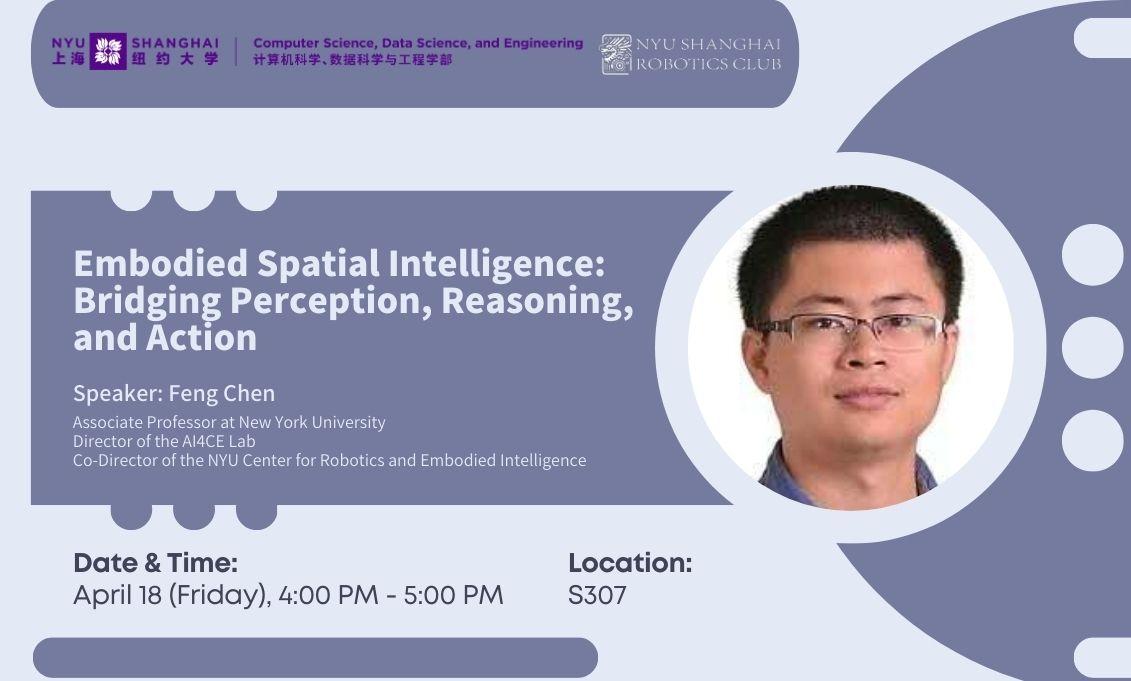

Abstract

This talk introduces how machines learn to see, understand, and interact with the world in ways similar to humans. We delve into embodied spatial intelligence, where agents build robust representations of their surroundings to navigate and complete tasks. The presentation surveys key challenges and current solutions, including building maps, exploring unknown environments, understanding human intentions, and guiding robot manipulation and navigation using videos of human activities. We will also discuss the broader impact of these advances, from self-driving and delivery to household automation and space exploration.

Bio

Chen Feng is an Associate Professor at New York University, Director of the AI4CE Lab, and Co-Director of the NYU Center for Robotics and Embodied Intelligence. His research focuses on active and collaborative robot perception and learning to address multidisciplinary, use-inspired challenges in construction, manufacturing, and transportation. He is dedicated to developing novel algorithms and systems that enable intelligent agents to understand and interact with dynamic, unstructured environments. Prior to NYU, he served as a research scientist in the Computer Vision Group at Mitsubishi Electric Research Laboratories (MERL) in Cambridge, Massachusetts, where he developed patented algorithms for localization, mapping, and 3D deep learning in autonomous vehicles and robotics. He earned his doctoral and master's degrees from the University of Michigan between 2010 and 2015, and his bachelor's degree from Wuhan University in 2010. As an active contributor to the AI and robotics communities, he has published over 90 papers in top conferences and journals such as CVPR, ICCV, RA-L, ICRA, and IROS, and has served as an area chair and associate editor. In 2023, he received the NSF CAREER Award. More information about his research can be found at ai4ce.github.io.